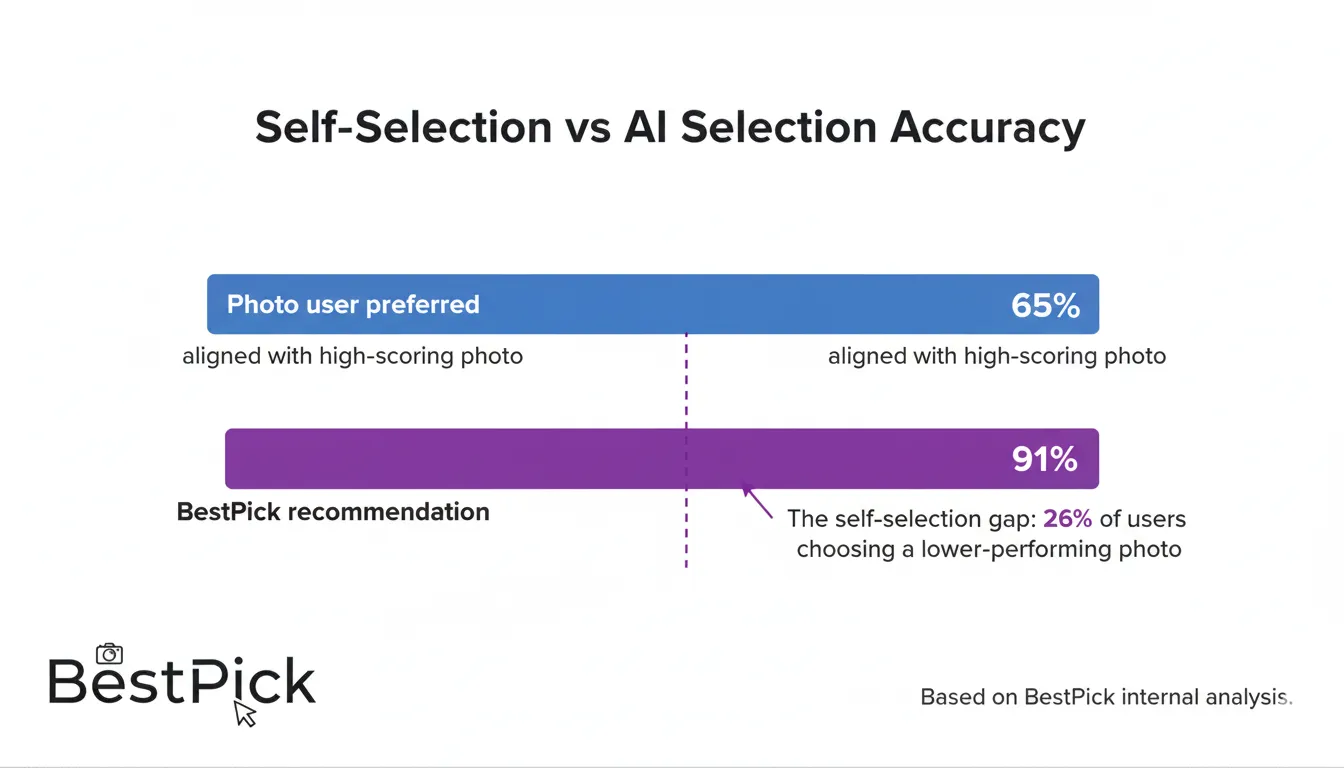

I built BestPick because I wanted to solve a specific problem: people consistently choose the wrong profile photo for themselves, because self-selection is systematically biased toward photos that match our self-image rather than photos that communicate most effectively with strangers.

The question I needed to answer was: what do strangers actually respond to in a profile photo? Not what they say they respond to in surveys — what does the research on brain activity, eye tracking, and actual engagement outcomes show they respond to?

The answer from neuroscience, social psychology, and platform data is consistent across dozens of studies: a small number of specific, measurable visual signals account for the vast majority of the variance in profile photo engagement. BestPick's analysis engine is built to score those specific signals. This article explains each one.

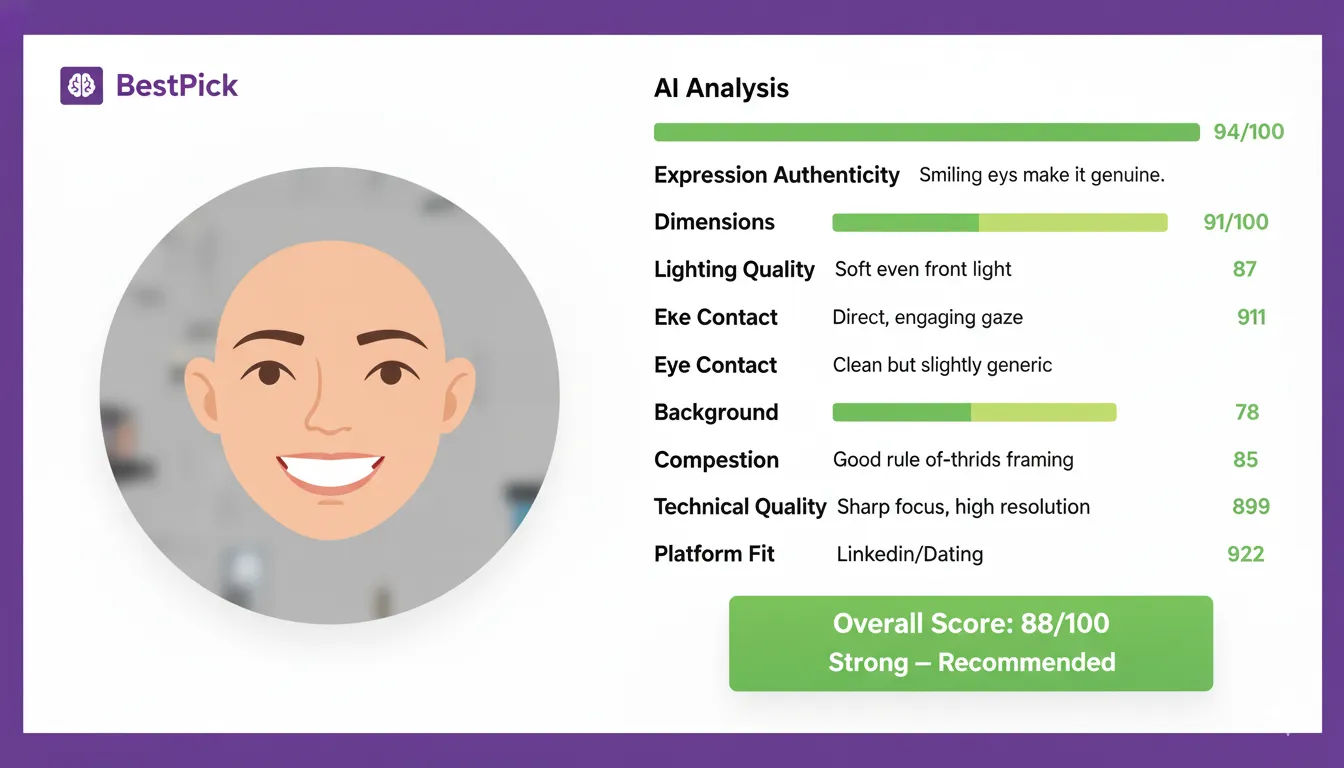

BestPick does not rate whether you are attractive. It measures whether your photo is communicating effectively — whether the specific visual signals that drive engagement for your chosen platform are present, well-executed, and working together. These signals are measurable, objective, and largely independent of physical appearance.

Dimension 1: Expression Authenticity (Highest Weight)

Expression authenticity is the single strongest predictor of photo performance across every platform in BestPick's dataset. It is also the dimension that self-selection most consistently gets wrong — people prefer photos where they look their most sophisticated or "cool," which typically means a less expressive, more composed face. But the research is unambiguous: genuine expressions outperform posed ones by significant margins.

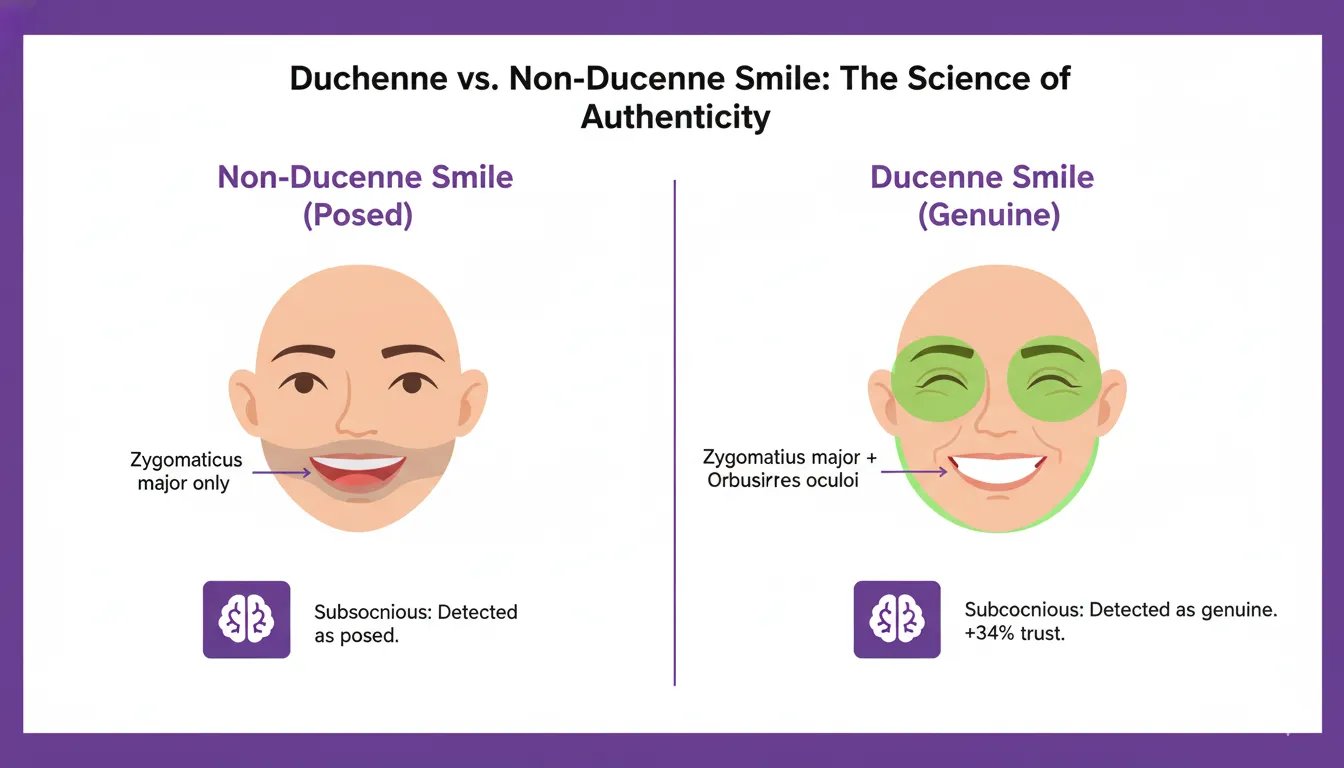

What BestPick measures: The presence or absence of Duchenne smile markers, specifically whether the orbicularis oculi muscles around the eyes are engaged. A genuine Duchenne smile raises the cheeks and produces crow's feet wrinkles at the outer corners of the eyes. A posed smile engages only the zygomaticus major (the muscle that pulls the mouth corners up), with no engagement around the eyes. Our computer vision module detects the facial action units associated with each type.
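To make the distinction concrete, here is a minimal sketch of how a smile could be classified from two facial action units. In the Facial Action Coding System, AU6 (cheek raiser) corresponds to the orbicularis oculi and AU12 (lip corner puller) to the zygomaticus major. The `ActionUnits` type, the `classify_smile` function, the 0 to 5 intensity scale, and the threshold are all assumptions for illustration, not BestPick's actual module.

```python
from dataclasses import dataclass

# Hypothetical activation threshold on a 0-5 intensity scale (an assumption).
AU_ACTIVE_THRESHOLD = 1.0


@dataclass
class ActionUnits:
    au6_cheek_raiser: float        # eye-area engagement (orbicularis oculi)
    au12_lip_corner_puller: float  # mouth-corner engagement (zygomaticus major)


def classify_smile(aus: ActionUnits, threshold: float = AU_ACTIVE_THRESHOLD) -> str:
    """Label an expression as 'duchenne', 'posed', or 'neutral'."""
    mouth_active = aus.au12_lip_corner_puller >= threshold
    eyes_active = aus.au6_cheek_raiser >= threshold
    if mouth_active and eyes_active:
        return "duchenne"  # mouth and eye area both engaged
    if mouth_active:
        return "posed"     # mouth only, no eye engagement
    return "neutral"       # no smile detected at the mouth


print(classify_smile(ActionUnits(au6_cheek_raiser=2.5, au12_lip_corner_puller=3.0)))
print(classify_smile(ActionUnits(au6_cheek_raiser=0.2, au12_lip_corner_puller=3.0)))
```

A production system would more likely work with continuous intensities than hard labels, but the two-signal structure is the point here.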

Why it matters: Human brains have a dedicated system for detecting fake smiles — it operates faster than conscious awareness. EEG studies show measurably different neural responses to Duchenne versus non-Duchenne smiles even when conscious viewers cannot articulate why one photo "feels" warmer. BestPick's expression score predicts this subconscious response.

Score impact: In BestPick's analysis, photos with Duchenne smiles score an average of 34% higher on trustworthiness prediction and 27% higher on overall engagement prediction than photos with posed or neutral expressions.

Dimension 2: Lighting Quality and Direction

What BestPick measures: Lighting quality assessment evaluates three sub-factors. First, evenness — are shadows harsh and deep, or soft and graduated? Second, direction — does the light source create dimensional depth (from a 45-degree angle) or flat, frontal illumination? Third, color temperature — is the light warm (associated with approachability and natural environments) or cool (associated with office fluorescents and clinical settings)?
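As a rough illustration of those three sub-factors, the sketch below computes simple proxies from the RGB pixels of a face region: luminance spread for evenness, left versus right brightness for direction, and red versus blue balance for color temperature. The function name, the feature names, and the choice of proxies are assumptions made for this example, not the measures BestPick uses.

```python
from statistics import mean, pstdev

Pixel = tuple[int, int, int]  # (R, G, B), each 0-255


def luminance(p: Pixel) -> float:
    r, g, b = p
    return 0.2126 * r + 0.7152 * g + 0.0722 * b  # Rec. 709 luma weights


def lighting_features(face: list[list[Pixel]]) -> dict[str, float]:
    """Crude lighting proxies for a face crop given as rows of RGB pixels."""
    lum = [[luminance(p) for p in row] for row in face]
    flat = [v for row in lum for v in row]
    half = len(lum[0]) // 2
    left = mean(v for row in lum for v in row[:half])
    right = mean(v for row in lum for v in row[half:])
    reds = mean(p[0] for row in face for p in row)
    blues = mean(p[2] for row in face for p in row)
    return {
        # Evenness proxy: larger spread suggests harsher shadows.
        "contrast": pstdev(flat) / 255.0,
        # Direction proxy: positive means the left half is brighter.
        "side_balance": (left - right) / 255.0,
        # Color temperature proxy: positive means warmer (more red than blue).
        "warmth": (reds - blues) / 255.0,
    }


# A warm patch lit from the left, as a toy 2x4 "face" region.
lit = (220, 180, 140)
shaded = (110, 90, 70)
patch = [[lit, lit, shaded, shaded], [lit, lit, shaded, shaded]]
print(lighting_features(patch))
```

Real images would need a face detector and a proper white-balance estimate first; the sketch only shows that each sub-factor reduces to a number that can be scored.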

Why it matters: Lighting quality is the variable that most separates amateur from professional photos regardless of camera hardware. Even, warm, directional light creates a three-dimensional, engaging portrait. Flat, cool, or harsh lighting creates a two-dimensional, unflattering image — even at the same focal length and same subject distance.

Score impact: Photos with warm lighting score an average of 31% higher on engagement prediction for Instagram-goal submissions. For LinkedIn, soft directional lighting scores significantly higher on professional impression than flat frontal lighting.

Dimension 3: Eye Contact Direction

What BestPick measures: Gaze direction detection determines whether the subject's eyes are directed at the camera lens (producing direct eye contact with the viewer) or directed elsewhere — at their own face on a phone screen, slightly off to the side, or downward.

Why it matters: Eye-tracking research on profile photo viewing finds that the eyes are the first feature viewers fixate on, within 200 milliseconds. Direct eye contact with the camera creates a psychological simulation of real eye contact: a sense of connection and acknowledgment that averted gaze does not produce. Unravel Research's EEG study on Tinder photos found that direct-gaze photos generated measurably higher attraction-associated theta wave activity.

Score impact: Direct eye contact photos score an average of 18% higher on engagement prediction than otherwise comparable photos with averted gaze in BestPick's analysis.

Dimension 4: Background Quality

What BestPick measures: Three sub-scores: contrast (visual separation between subject and background), complexity (cognitive load imposed by the background), and contextual signal (what the background communicates about personality and status for the selected platform goal).

Why it matters: Cluttered backgrounds increase cognitive processing load, which EEG research correlates with lower attraction scores. The brain under processing load defaults more frequently to a "no" response. A clean background removes this friction entirely and allows all processing resources to focus on the face and expression.

Score impact: Backgrounds rated as "cluttered" reduce overall photo scores by an average of 28% in BestPick's analysis, even when the expression, lighting, and framing are otherwise strong.

Dimension 5: Composition and Framing

What BestPick measures: Face-to-frame ratio (what percentage of the image area is occupied by the face), centering, crop appropriateness for the target platform's display format, and the presence of visual elements that compete with or distract from the face.

Why it matters: At the display sizes used by most platforms — LinkedIn at 400px circle, Instagram at 110px circle, dating apps at various thumbnail sizes — a photo where the face fills a small portion of the frame becomes essentially unreadable. The face must be large enough to communicate expression at the actual display size, not just at the full upload resolution.
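The arithmetic behind this is simple enough to sketch. The helpers below compute the face-to-frame ratio and the face height in pixels once a square crop is scaled to a given display size. The function names and the example dimensions are assumptions for illustration. The 400px and 110px display sizes are the ones quoted above.

```python
def face_to_frame_ratio(face_w: int, face_h: int, img_w: int, img_h: int) -> float:
    """Fraction of the image area covered by the face bounding box."""
    return (face_w * face_h) / (img_w * img_h)


def face_height_at_display(face_h: int, img_h: int, display_px: int) -> float:
    """Face height in pixels after the image is scaled to display_px tall."""
    return face_h / img_h * display_px


# Hypothetical upload: 1200x1200 crop with a 300x400 face bounding box.
ratio = face_to_frame_ratio(300, 400, 1200, 1200)
print(f"face-to-frame ratio: {ratio:.1%}")
for platform, size in {"LinkedIn": 400, "Instagram": 110}.items():
    px = face_height_at_display(400, 1200, size)
    print(f"{platform}: face is about {px:.0f}px tall at {size}px display")
```

In this example the face that looks fine at upload resolution shrinks to roughly a third of a 110px thumbnail, which is why the same crop can read well on one platform and poorly on another.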

Score impact: Platform-specific framing scores directly affect the composition rating. A photo perfectly framed for a desktop display may score poorly for a mobile-first platform like Instagram where thumbnail readability is critical.

Dimension 6: Technical Quality

What BestPick measures: Sharpness (is the subject in focus?), exposure (is the image correctly exposed without blown-out highlights or crushed shadows?), noise (is there significant grain from high ISO or heavy compression?), and color accuracy.
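Two of these checks have well-known, simple proxies, sketched below on a grayscale pixel grid: variance of the Laplacian as a focus measure, and the fraction of clipped pixels as an exposure check. These are common general-purpose techniques swapped in for illustration. Whether BestPick uses them is not stated, and the function names and clipping thresholds are assumptions.

```python
from statistics import pvariance


def laplacian_variance(gray: list[list[float]]) -> float:
    """Focus proxy: variance of a 4-neighbour Laplacian over interior pixels.

    Higher values indicate more fine detail (sharper edges).
    """
    h, w = len(gray), len(gray[0])
    responses = [
        gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
        - 4 * gray[y][x]
        for y in range(1, h - 1)
        for x in range(1, w - 1)
    ]
    return pvariance(responses)


def clipped_fraction(gray: list[list[float]], low: float = 5, high: float = 250):
    """Return (crushed-shadow fraction, blown-highlight fraction)."""
    flat = [v for row in gray for v in row]
    shadows = sum(v <= low for v in flat) / len(flat)
    highlights = sum(v >= high for v in flat) / len(flat)
    return shadows, highlights


# A hard edge versus a smooth ramp across the same brightness range.
sharp = [[0, 0, 0, 255, 255, 255] for _ in range(5)]
soft = [[0, 51, 102, 153, 204, 255] for _ in range(5)]
print(laplacian_variance(sharp) > laplacian_variance(soft))
print(clipped_fraction(sharp))
```

The input needs to be at least 3 pixels in each dimension so that interior pixels exist. Noise and color accuracy would need separate measures and are left out of this sketch.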

Why it matters: Technical quality creates a halo effect — viewers associate technical photo quality with the quality and attention-to-detail of the person in the photo. This is a well-documented cognitive bias: blurry or poorly exposed photos lower perceived competence and perceived effort, independent of the subject's actual appearance or expression.

Dimension 7: Platform Fit

What BestPick measures: Platform fit evaluates whether the specific combination of signals in the photo — formality level, warmth, lifestyle context, expression type, background context — aligns with what the target platform's audience responds to. This is the dimension that changes most by goal selection.

Why it matters: The optimal photo for LinkedIn and the optimal photo for Tinder are almost certainly different photos. LinkedIn audiences evaluate professional credibility — they respond to signals of competence, reliability, and authority. Dating app audiences evaluate romantic potential — they respond to warmth, approachability, and evidence of an interesting life. Instagram audiences evaluate aesthetic value and personality expression. Platform fit scores how well the photo's signals match the target audience's evaluation framework.

Practical implication: When you select your goal in BestPick (LinkedIn, dating, Instagram), the weightings of each dimension shift accordingly. A serious, composed expression might score higher for a senior executive LinkedIn submission and lower for a dating app submission. The same photo can produce different overall scores for different platform goals — which is why goal selection matters.

How Platform Goal Changes the Scoring

The same photo analyzed for different platform goals can produce meaningfully different scores because dimension weightings shift. Here is how the weights change:

| Dimension | LinkedIn Weight | Dating App Weight | Instagram Weight |
|---|---|---|---|
| Expression Authenticity | High | Very High | High |
| Lighting Quality | High | High | Very High |
| Eye Contact | High | Very High | Medium |
| Background Quality | Very High | Medium | High |
| Composition | High | High | Very High |
| Technical Quality | Medium | Medium | High |
| Platform Fit | High | High | High |
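To see how shifting weights changes the result for one photo, here is a minimal sketch of a weighted average over the seven dimensions. The weight labels come from the table above. The numeric mapping (Medium = 1, High = 2, Very High = 3), the `overall_score` function, and the example scores are assumptions for illustration, since the actual numeric weights are not published here.

```python
# Hypothetical numeric values for the qualitative weight labels.
LEVEL = {"Medium": 1.0, "High": 2.0, "Very High": 3.0}

# Labels copied from the weight table; dictionary keys are illustrative names.
WEIGHTS = {
    "linkedin": {
        "expression": "High", "lighting": "High", "eye_contact": "High",
        "background": "Very High", "composition": "High",
        "technical": "Medium", "platform_fit": "High",
    },
    "dating": {
        "expression": "Very High", "lighting": "High", "eye_contact": "Very High",
        "background": "Medium", "composition": "High",
        "technical": "Medium", "platform_fit": "High",
    },
    "instagram": {
        "expression": "High", "lighting": "Very High", "eye_contact": "Medium",
        "background": "High", "composition": "Very High",
        "technical": "High", "platform_fit": "High",
    },
}


def overall_score(scores: dict[str, float], goal: str) -> float:
    """Weighted average of 0-100 dimension scores for a platform goal."""
    weights = {dim: LEVEL[label] for dim, label in WEIGHTS[goal].items()}
    total = sum(weights.values())
    return sum(scores[dim] * w for dim, w in weights.items()) / total


# One hypothetical photo: strong expression and eye contact, weak background.
photo = {
    "expression": 90, "lighting": 60, "eye_contact": 80, "background": 40,
    "composition": 70, "technical": 70, "platform_fit": 70,
}
for goal in WEIGHTS:
    print(goal, round(overall_score(photo, goal), 1))
```

With these assumed numbers the photo scores highest for the dating goal, because that goal rewards its expression and eye contact most and penalizes its weak background least. In practice the platform fit score itself would also change with the goal. Here only the weights do.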

Why Human Self-Selection Fails and AI Helps

The fundamental reason BestPick exists is that human self-selection of profile photos is systematically biased in a specific direction: people prefer photos that match their self-image rather than photos that communicate most effectively with strangers. This is not a personality flaw — it is a predictable consequence of how self-perception works.

You have seen your own face thousands of times. You have strong preferences about which photos "look like you." Those preferences are calibrated against your own internal reference point — not against the first-impression response of a stranger who has never seen your face before.

BestPick evaluates photos through the same signals a stranger processes — objectively, without the familiarity bias that makes self-selection unreliable. It scores the variables that research consistently identifies as drivers of engagement, not the variables that matter to your self-perception.


See Your 7-Dimension Score in 10 Seconds — Free

Upload your profile photo candidates, select your platform goal, and receive a complete AI analysis with individual dimension scores and written recommendations — no account required.
